Could the TEA Network Lose Data During Downtime?

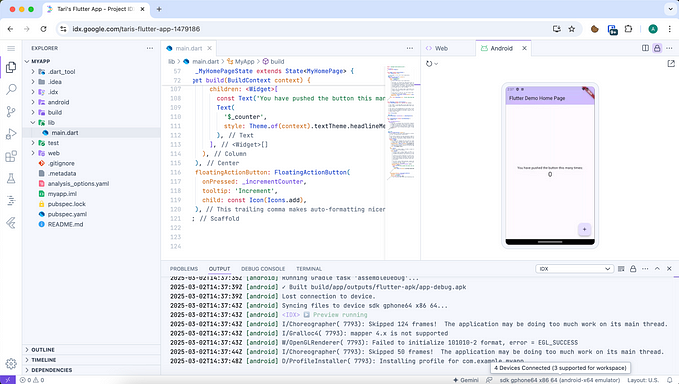

The world of centralized servers makes many assurances for customers worried about losing data during possible outages. Beyond SLA guarantees, there are fail-over servers and data backups (hopefully off-site) should a catastrophic event occur at the datacenter.

But these guarantees all depend on you, the customer, trusting a centralized company. From personal experience, I’ve lost data multiple times when hosting companies promised off-site backups but never tested them under anything approximating a real emergency.

That’s why the TEA Project is moving beyond having to trust anyone and moving Web3 to a compute infrastructure that has trust baked in.

Designing this level of trust directly into the system allows for new ways of securing data that the world hasn’t seen yet. For example, we often use HTTP instead of HTTPS in our ecosystem (such as when nodes host TApps) because HTTPS only protects data in transit. In the TEA ecosystem, we assume the server is already compromised and make sure the system stays secure even then.

This also extends to our new model of code traveling to the data, which allows for a groundbreaking level of security for private data. Home consumers can start using Web3 gateways to secure their family’s data, fetching apps that interact with that data only inside the home.

How Resilient is the TEA Network During Network Disruption?

The TEA Project is building a decentralized computing infrastructure that’s resilient to network outages and targeted attacks. The TEA network’s resiliency owes a great deal to its decentralized nodes, but let’s walk through a few disaster scenarios to see what kind of data loss (if any) could happen.

The most numerous nodes in our ecosystem are the hosting nodes, and they don’t store any permanent data as they host the platform’s dApps. They act much like purely functional AWS Lambda compute instances. Because the TEA Project mining infrastructure is decentralized, other nodes will take over a task should any one node go down. When a hosting node suddenly goes down, the clients connected to it lose their connection, but the front-end algorithm automatically connects to the next active hosting node (the list comes from a query to the underlying layer1, such as ETH or BSC).
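The failover step described above can be sketched roughly as follows. This is a minimal illustration, not the TEA front-end code: the function `next_active_node`, the node names, and the `is_alive` health check are all hypothetical, and the node list is hard-coded here where the real client would read it from layer1.

```python
from typing import Callable, Iterable, Optional


def next_active_node(
    nodes: Iterable[str],
    is_alive: Callable[[str], bool],
    skip: Optional[str] = None,
) -> Optional[str]:
    """Return the first node that passes the health check, or None.

    `nodes` stands in for the hosting-node list read from layer1;
    `skip` is the node that just dropped the connection.
    """
    for node in nodes:
        if node == skip:
            continue
        if is_alive(node):
            return node
    return None


# Hypothetical node list and health check, for illustration only.
hosting_nodes = ["node-a.example", "node-b.example", "node-c.example"]
online = {"node-c.example"}

replacement = next_active_node(
    hosting_nodes, lambda n: n in online, skip="node-a.example"
)
print(replacement)  # node-c.example
```

Because hosting nodes keep no permanent data, the client needs nothing more than a new address to resume; there is no session state to migrate in this sketch.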

So there’s no real possibility of data loss for the hosting nodes that host the dApps. This is in part due to the decentralized nature of the nodes (one node goes down and another takes its place), but it also follows from the simple fact that hosting nodes don’t host any data in the traditional cloud computing sense. Let’s now turn our attention to the different possible disruption cases for global nodes that host the database layer and the state machine.

For the global nodes that run the state machine and the database, as long as at least 2 of them are online they can reach consensus on the current state. If only 1 node is left online, consensus stops (the state will not update because no new transaction can be handled), but no data is lost. All the unhandled transactions wait in the transaction pool (we call it a conveyor). As soon as another node comes back online, consensus can be reached again and those transactions are handled, so the state machine continues to update.

The state machine nodes also regularly save an encrypted snapshot of the data to IPFS. As long as at least 1 global node is available, it will continue to save these snapshots. If there are ever 0 global nodes online, the system stays dormant until a global CML comes back online and restores the latest snapshot from IPFS.
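To make the three cases concrete, here is a toy model of the global layer. It is only a sketch under stated assumptions: the `GlobalLayer` class and its field names are invented for illustration, and the quorum of 2 is inferred from the single-node case described above (one node cannot reach consensus, but consensus resumes once another node returns). Real consensus, snapshot encryption, and IPFS storage are not modeled.

```python
from collections import deque
from dataclasses import dataclass, field
from typing import Deque, List

QUORUM = 2  # assumption: two live global nodes are enough for consensus


@dataclass
class GlobalLayer:
    online_nodes: int
    conveyor: Deque[str] = field(default_factory=deque)  # pending transactions
    state: List[str] = field(default_factory=list)       # applied transactions

    def status(self) -> str:
        if self.online_nodes >= QUORUM:
            return "consensus"
        if self.online_nodes == 1:
            return "stalled"   # no state updates, snapshots still saved
        return "dormant"       # waits for a node to restore a snapshot

    def submit(self, tx: str) -> None:
        self.conveyor.append(tx)
        self.process()

    def process(self) -> None:
        # Transactions leave the conveyor only while consensus is possible.
        while self.conveyor and self.status() == "consensus":
            self.state.append(self.conveyor.popleft())


layer = GlobalLayer(online_nodes=1)
layer.submit("tx1")
layer.submit("tx2")
print(layer.status(), list(layer.conveyor), layer.state)
# stalled ['tx1', 'tx2'] []

layer.online_nodes = 2   # a second node comes back online
layer.process()
print(layer.status(), list(layer.conveyor), layer.state)
# consensus [] ['tx1', 'tx2']
```

The point of the model is that a stalled network delays transactions without dropping them: everything submitted during the outage is still in the conveyor when consensus resumes.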

But as you can see from playing out the various contingencies, no data is ever lost. The only ways data could be lost are if 1) the underlying layer1 (e.g. Ethereum) stops working, 2) IPFS itself stops working, or 3) all global nodes are physically destroyed so that no TPM (which holds the private key) can restore the stored encrypted state from the IPFS backup.